How to Build an AI Agent: The Complete Build vs Buy Guide for Technical Founders (2026)

- Riya Thambiraj

- Artificial Intelligence


Key Takeaways

AI agents are moving from experiments to core infrastructure and can handle autonomous, goal-driven tasks across multiple steps.

A useful AI agent needs three things working together: natural language understanding, a decision engine, and deep integrations with your systems.

AI agents make sense when you have high-volume repetitive interactions, at least 80 percent predictable workflows, and clear metrics to measure impact and ROI.

Platforms are faster and cheaper to start, but have hard limits on customization, while custom builds cost more upfront but pay off at scale and for strategic, unique use cases.

A hybrid path works best for most teams: validate on a platform for a few months, then build a custom agent using real usage data and proven workflows.

Production-grade agents rely on a layered architecture that includes an LLM, orchestration, memory, tools and integrations, and strong guardrails for safety and reliability.

Real-world deployments at Klarna, Morgan Stanley, and Shopify show that agents deliver faster resolutions, higher efficiency, and strong adoption when they connect to real data and hand off complex cases to humans.

The main failure modes are poor prompts, weak knowledge access, hard integrations, and rising model costs, all of which can be managed with better design, RAG, tooling, and model selection.

The key decision is not whether to use AI agents but whether to choose platform, custom, or hybrid based on volume, budget, timeline, in-house dev resources, and how strategically important the agent is to your business.

When Google's CEO, Sundar Pichai, suggests AI could eventually perform C-suite duties more efficiently than humans, and Meta's CEO, Mark Zuckerberg, is actively testing an AI-powered assistant to support day-to-day strategic decisions, one conclusion becomes unavoidable: AI agents are no longer experimental. They are becoming infrastructure.

But here is what those headlines miss. The question for technical founders is not whether to adopt AI agents. It is how to adopt them without wasting six months and five figures discovering that a platform cannot do what you need, or that you overbuilt when a platform would have worked perfectly well.

Before diving into the details, let’s clarify who this guide is designed to help.

Who Should Read This Guide

This guide is for technical and business leaders evaluating AI agents to automate workflows, reduce manual effort, and improve response quality at scale. It is especially relevant for technical founders and CTOs who need clarity on build vs buy decisions, architecture, timelines, and realistic MVP costs.

Product and operations leaders will find it useful for reducing repetitive workloads, improving consistency, and driving efficiency without major structural changes. Customer experience leaders can use it to manage rising ticket volumes through AI-first resolution with intelligent escalation to human teams.

Enterprise and IT directors will benefit from guidance on evaluating vendors, integration requirements, security, compliance, and long-term cost trade-offs. Revenue and growth leaders can better understand the impact on unit economics, from cost reduction to new revenue opportunities. The guide is also valuable for investors and advisors assessing scalability, validating build vs buy decisions, and estimating realistic timelines for ROI.

What You'll Discover in This Guide

This guide covers the full terrain: how to build an AI agent, when to buy instead, what custom AI agent development actually costs, how to choose an AI agent platform, and the technical architecture decisions that determine whether your agent delivers real value or becomes an expensive answering machine.

What Is an AI Agent? Beyond the Marketing Hype

An AI agent is software that perceives its environment, makes decisions, and takes actions to achieve specific goals without constant human supervision. Unlike traditional automation built on if-then rules, or basic chatbots constrained to scripted responses, AI agents understand context, make decisions based on goals, and chain multi-step tasks together autonomously.

The distinction matters because it defines the entire value proposition.

| Basic Chatbot | AI Agent |
|---|---|
| Rules-Based | Goal-Driven |
| Follows predefined scripts | Understands intent in natural language |
| User navigates menus | Agent navigates on the user's behalf |
| No real decision-making | Decides which actions to take |
| Fails on unexpected phrasing | Handles variations and ambiguity |
| Cannot take direct action | Updates databases, sends emails, triggers workflows |

If you're earlier in the process and evaluating chatbot development as a starting point, it's a valid option for simpler, scripted use cases. Just understand what you'll outgrow.

Consider the practical difference. A user writes: 'Can you move my Friday delivery to next week Wednesday?' A chatbot presents a menu. An AI agent checks the calendar, finds the Wednesday slot, updates the system, and confirms the change in a single response. The agent does the navigation work. That is autonomy.


The Three Core Capabilities

Every useful AI agent combines three components. Remove any one of them and what you have is, in practice, an expensive answering machine.

Natural Language Understanding extracts meaning from messy human input. 'I want to cancel,' 'Can I stop this?' and 'Forget it, I'm done' are the same instruction. Modern large language models handle these variations reliably.

A Decision Engine connects that understanding to action. It uses business logic, workflows, and retrieval-augmented generation to determine what happens next. The logic might read: check order age, if under thirty days auto-approve the refund, if over thirty days escalate to a human agent.

An Integration Layer connects the agent to your systems and allows it to act. It queries your CRM, updates your database, sends confirmation emails, triggers workflows. Without integrations, the agent can talk but cannot do.
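The refund rule used above to illustrate the decision engine can be sketched as a small function. This is a minimal illustration, not a production policy. The names `decide_refund` and `RefundDecision` are hypothetical, and the handling of an order that is exactly thirty days old (escalated here) is an assumption, since the rule only speaks of "under" and "over" thirty days.

```python
from datetime import date
from enum import Enum


class RefundDecision(Enum):
    AUTO_APPROVE = "auto_approve"
    ESCALATE_TO_HUMAN = "escalate_to_human"


def decide_refund(order_date: date, today: date, window_days: int = 30) -> RefundDecision:
    """Under `window_days` old: auto-approve. Otherwise: escalate to a human."""
    order_age_days = (today - order_date).days
    if order_age_days < 0:
        # An order dated in the future is bad data; let a human look at it.
        return RefundDecision.ESCALATE_TO_HUMAN
    if order_age_days < window_days:
        return RefundDecision.AUTO_APPROVE
    # Exactly at the window or older: escalated here by assumption.
    return RefundDecision.ESCALATE_TO_HUMAN
```

In a real agent, the language model would extract the order reference from the conversation, the integration layer would look up the order date, and a deterministic rule like this one would make the call. Keeping the rule in code rather than in the prompt makes it testable and auditable.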

When AI Agents Make Sense: The ROI Reality Check

Eighty-five percent of enterprises and seventy-eight percent of SMBs plan to adopt AI agents in 2026. Adoption for adoption's sake, however, is expensive theater. The decision should begin with a straightforward three-question test.

The Three-Question Litmus Test

Do you have high-volume, repetitive interactions?

AI agents thrive on scale. The break-even point typically sits around 300 to 500 monthly interactions where a human spends three to five minutes each. If your team handles 500 or more customer inquiries per month, dozens of order-status checks daily, or frequent appointment rescheduling requests, you are in AI agent territory.

Is 80% of the work predictable?

AI agent development delivers maximum value on the long tail of boring work: password resets, order status updates, basic troubleshooting, appointment rescheduling, and FAQs with slight variations. If 80 percent of interactions follow recognizable patterns, automation makes sense. The remaining 20 percent escalates to humans who can focus on genuinely complex, high-value work.

Can you measure the impact?

Without clear success metrics, there is no meaningful ROI calculation. You need a before-and-after story: support tickets reduced by X percent, response time reduced from eight minutes to eight seconds, one FTE reallocated from repetitive queries to strategic work.

ROI Calculation: A Simple Model

Annual cost of manual handling = (monthly tickets x 12) x (average handling time in hours) x (hourly agent cost).

At 1,000 tickets per month, 10 minutes average handling time, and a $25 hourly rate, that is 12,000 tickets x (10/60) hours x $25, or $50,000 per year.

If an AI agent handles 50% of those interactions, that results in $25,000 in direct savings per year.

A prebuilt platform typically costs $5,000-$15,000 per year, which means it can deliver positive ROI within the first year.

A custom-built solution may cost $30,000-$60,000 upfront, plus $5,000-$10,000 annually in maintenance. While the initial investment is higher, it can break even in 1.5-2.5 years and may unlock greater long-term savings depending on how deeply it integrates and automates workflows.
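If you want to plug in your own numbers, the model fits in a few lines of Python. This is a rough sketch: the function names are made up for illustration, the 50% automation share is the assumption used in this section, and the payback formula ignores ramp-up time and discounting.

```python
def annual_manual_cost(monthly_tickets: int, handling_minutes: float, hourly_rate: float) -> float:
    """(monthly tickets x 12) x (handling time in hours) x (hourly agent cost)."""
    return monthly_tickets * 12 * handling_minutes * hourly_rate / 60


def payback_years(upfront: float, annual_running_cost: float, annual_savings: float) -> float:
    """Years until cumulative net savings cover the upfront cost."""
    net = annual_savings - annual_running_cost
    if net <= 0:
        raise ValueError("Running cost is not covered by savings; the agent never pays back.")
    return upfront / net


manual = annual_manual_cost(monthly_tickets=1_000, handling_minutes=10, hourly_rate=25)
savings = manual * 0.5  # assumes the agent handles half of the interactions

# Low end of the custom range: $30,000 upfront, $5,000 per year in maintenance.
low_end_payback = payback_years(upfront=30_000, annual_running_cost=5_000, annual_savings=savings)
print(f"Manual cost ${manual:,.0f}, savings ${savings:,.0f}, payback {low_end_payback:.1f} years")
```

Payback is sensitive to the upfront figure and the automation share, so run it with your own ticket volume before committing to either path.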

When to Skip AI Agents Entirely

The honest answer is that AI agents are not always the right tool. Avoid them if your monthly interaction volume is below 200 (the ROI simply does not materialize), if every interaction is unique and requires creative or strategic judgment, if your systems are too fragmented for reasonable integration, or if you are in a regulatory environment where automation carries legal risk. AI amplifies good processes. It does not create them.

Still deciding between agents, chatbots, or a broader AI product? Our AI application development guide maps out where each fits.

Build vs Buy: The Strategic Decision Framework

This is the most consequential decision in AI agent development. Choose wrong, and you either waste money on a platform that cannot do what you need, overbuild custom when a platform would have worked fine, or end up rebuilding six months later because you hit hard limitations.

The Platform Path (Buy)

Platforms give you pre-built AI agent infrastructure: no-code or low-code setup, standard integrations, managed hosting, and a time-to-value measured in weeks rather than months. The trade-off is control. You get their conversation design, their UX, their roadmap, and their data policy. Customization hits walls quickly, and those walls tend to appear precisely when your use case gets interesting.

| Platform | Best For | Cost Range | Key Limitation |
|---|---|---|---|
| Zapier / Make.com | Simple automation + AI | $20–$300/mo | No complex multi-turn conversations |
| Intercom Fin | Customer support on Intercom | $39/mo + $0.99/resolution | Support use case only |
| Microsoft Copilot Studio | Microsoft-ecosystem enterprises | $200/agent/mo | Locked into Microsoft stack |
| Google Dialogflow | Developer-friendly mid-tier | $0.002/text request | Advanced workflows require custom code |
| Voiceflow | Prototyping and design | $50–$600/mo | Better as design tool than production |

The Custom Build Path

Custom AI agent development gives you full control over conversation design, integrations, data ownership, and the roadmap. It also means significant upfront investment, a timeline measured in months rather than weeks, and ongoing maintenance responsibility.

Custom builds in 2026 typically cost $20,000 to $60,000 for a focused single-domain MVP and $80,000 to $180,000 or more for complex multi-domain agents. Maintenance adds $5,000 to $15,000 per year. Expect a development timeline of 12 to 16 weeks for a well-scoped MVP.

The Hybrid Approach (Often Best)

The most pragmatic path for most teams is a two-phase hybrid. Use a platform for the first two to three months to validate demand, refine use cases, and gather real conversation data.

Then build custom using those insights. You get fast validation with low risk, a data-driven foundation for the custom build, and a team that already understands what users actually need rather than what they assumed users would need.

For most teams in the evaluation phase, AI MVP development is scoped to one use case, one integration and one measurable outcome. This is a faster path to proof than either a full platform rollout or a complete custom build.

-> The best AI agents are built iteratively. Version 1 will be imperfect. Launch, learn, and improve.

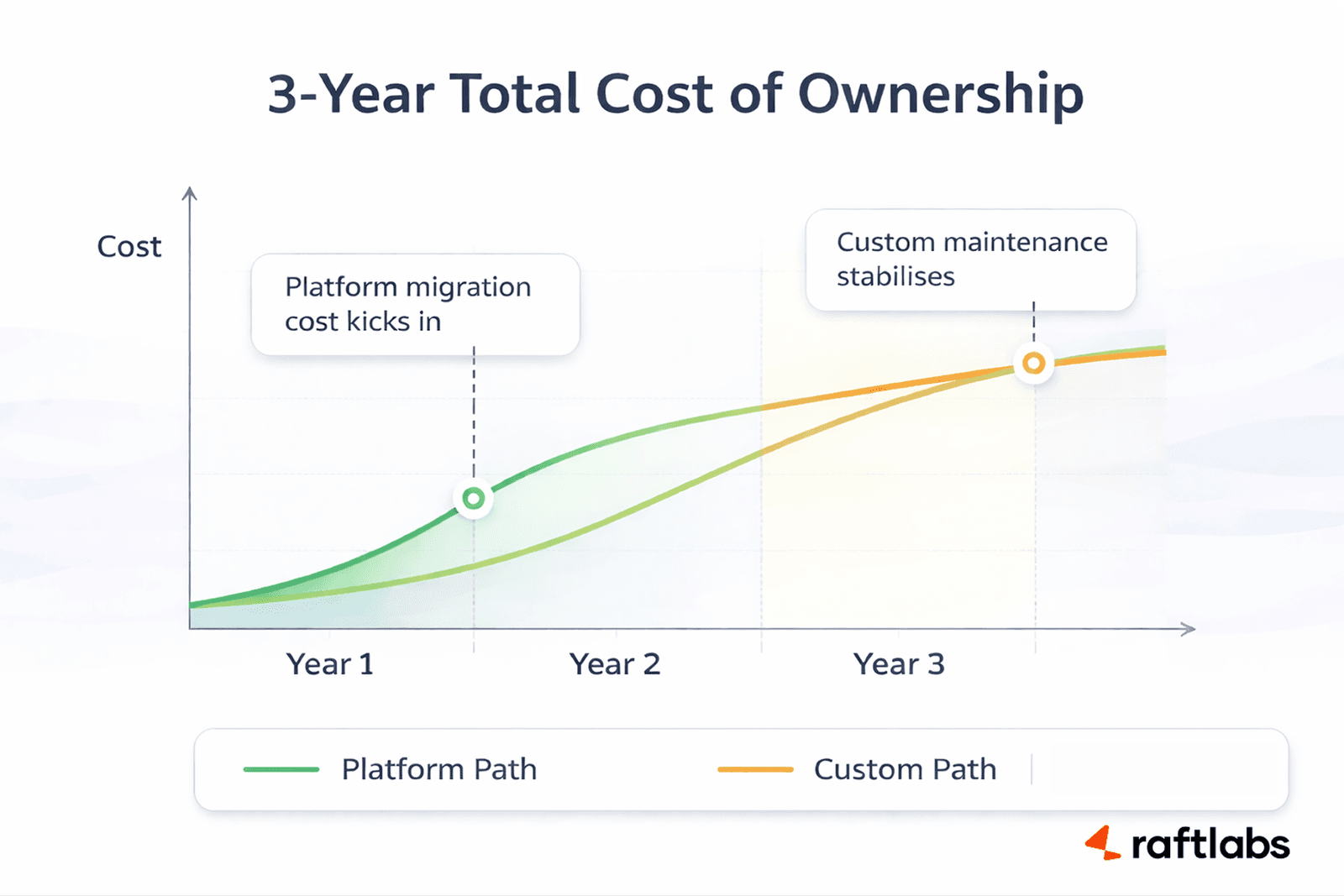

3-Year Total Cost of Ownership

Real AI agent costs are almost never what the pricing page says. Hidden costs on the platform side include overage fees, premium integrations, training time, and eventual migration costs when you outgrow what the platform offers. Hidden costs on the custom side include infrastructure at scale, security audits, developer time for updates, and slower time to market.

| Year | Platform Path | Custom Build Path |
|---|---|---|
| Year 1 | $18,000 (subscription + implementation) | $58,000 (build + infrastructure) |
| Year 2 | $18,000 (subscription + add-ons) | $19,000 (hosting + maintenance + features) |
| Year 3 | $58,000 (subscription + migration to custom) | $17,500 (hosting + maintenance + features) |
| 3-Year Total | $94,000–$134,000 | $94,500 |

The costs converge around year two to three, but the outcomes diverge sharply. After three years on a platform, you are typically stuck migrating to custom anyway, having spent platform fees and then migration costs on top. After three years on a custom build, you own a system tailored precisely to your workflows. If you are serious about AI agents as a long-term capability, custom breaks even faster than it appears.
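The convergence is easier to see as running totals. The sketch below only accumulates the yearly figures from the table, using the low end of the platform range. It adds no new data, and the variable names are illustrative.

```python
from itertools import accumulate

# Yearly figures copied from the 3-year table (USD).
platform_by_year = [18_000, 18_000, 58_000]  # year 3 includes migration to custom
custom_by_year = [58_000, 19_000, 17_500]

platform_cumulative = list(accumulate(platform_by_year))
custom_cumulative = list(accumulate(custom_by_year))

for year, (p, c) in enumerate(zip(platform_cumulative, custom_cumulative), start=1):
    print(f"Year {year}: platform ${p:,} vs custom ${c:,} (gap ${c - p:,})")
```

The gap between the two paths shrinks from $40,000 after year one to $500 after year three, which is the point at which the platform path has paid for a migration and the custom path has not.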


AI Agent Architecture: What You Are Actually Building

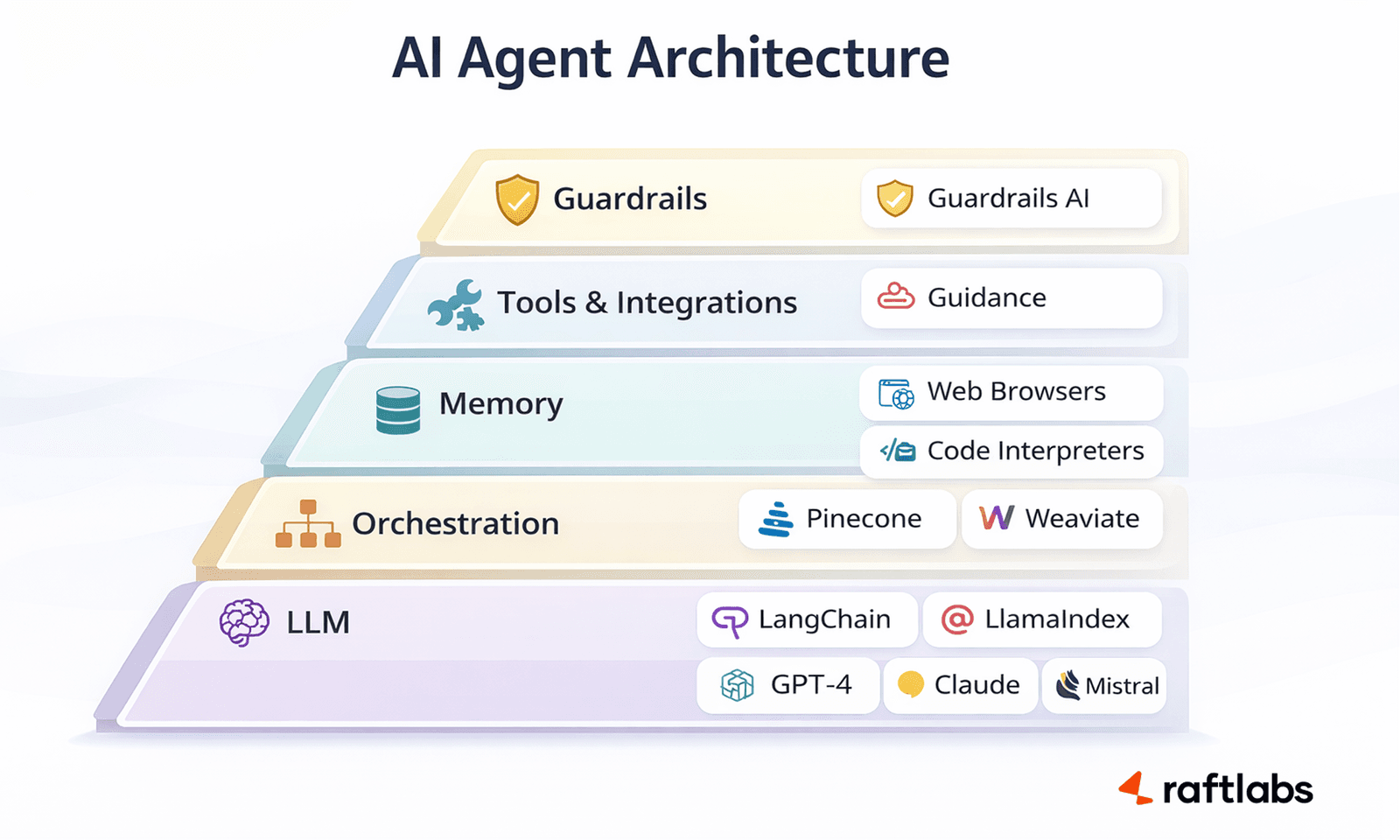

Whether you build or buy, understanding AI agent architecture helps you ask the right questions and make informed trade-offs. Every production-grade agent is composed of five layers.

The Language Model

The language model is the brain. It processes natural language and generates responses. Hosted API options include OpenAI GPT-4, Anthropic Claude, and Google Gemini. Self-hosted open-source options like Meta's Llama 3 or Mistral trade ease of use for lower per-call cost and stronger data control. The rule of thumb: hosted APIs are faster to integrate and best for most cases; self-hosted models make economic sense at very high volumes (one million or more requests per month) or when data residency requirements are strict.

The Orchestration Layer

The orchestration layer manages conversation flow, context, and tool usage. It routes user input to the right tool, maintains conversational context across turns, decides when to ask a clarifying question versus take an action, and handles errors and fallbacks. LangChain and LlamaIndex are the dominant open-source frameworks in 2026. Microsoft's Semantic Kernel is the choice for .NET environments.
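To make those responsibilities concrete, here is a hand-rolled sketch of a single orchestration step: keep context across turns, then decide whether to ask a clarifying question, take an action, or fall back. It deliberately does not use LangChain or any other framework. The intent name, the slot names, and the assumption that an upstream LLM call has already classified the intent and extracted the slots are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Short-lived conversational context carried across turns."""
    slots: dict = field(default_factory=dict)
    history: list = field(default_factory=list)


# Which pieces of information each supported intent needs before acting.
REQUIRED_SLOTS = {"reschedule_delivery": ["order_id", "new_date"]}


def next_step(session: Session, intent: str, new_slots: dict) -> dict:
    """Decide whether to clarify, act, or fall back for one user turn."""
    session.slots.update(new_slots)
    session.history.append(intent)
    if intent not in REQUIRED_SLOTS:
        # Unknown intent: fall back rather than improvise.
        return {"type": "fallback", "message": "Let me connect you with a teammate."}
    missing = [slot for slot in REQUIRED_SLOTS[intent] if slot not in session.slots]
    if missing:
        return {"type": "clarify", "ask_for": missing[0]}
    return {"type": "act", "tool": intent, "arguments": dict(session.slots)}
```

A first turn that supplies only an order ID yields a clarifying question about the date. A second turn that supplies the date yields an action, because the session remembered the order ID from the first turn.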

Memory Systems

Agents need memory. Short-term or session memory tracks the current conversation. Long-term memory stores user preferences and history in your database. Retrieval memory uses vector databases such as Pinecone, Weaviate, or Chroma to give the agent access to your knowledge base, product documentation, or policy library.

This last type, which powers retrieval-augmented generation, is what reduces hallucination on domain-specific questions, and it is one of the core reasons generative AI development for agents looks very different from a basic LLM integration.
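The retrieval step can be illustrated without any external service. The sketch below is a toy: it stands in word counts for learned embeddings and a Python list for a vector database such as Pinecone, Weaviate, or Chroma, and the policy snippets are made up. What carries over to a real build is the shape of the flow: embed the query, rank documents by similarity, and place the best match in the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. Real systems use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Ground the model by placing retrieved text ahead of the question."""
    context = "\n".join(retrieve(query, documents, k=1))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


POLICIES = [  # made-up knowledge-base snippets for illustration
    "Refunds are available within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
]
```

Calling `build_prompt("Can I get a refund within 30 days?", POLICIES)` produces a prompt containing the refund policy, so the model answers from your text rather than from its own recollection.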

Tools and Integrations

This is how agents take action. Internal tools query your database, update your CRM, and trigger internal workflows. External tools call payment processors, shipping carriers, and third-party APIs. Modern LLMs like GPT-4 and Claude support "function calling" or "tool use," meaning the model itself decides which tool to invoke and when based on the user's intent. This is what separates a real agent from a sophisticated chatbot.
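On the application side, tool use usually comes down to a registry and a dispatcher: the model proposes a call, and your code decides whether and how to run it. The sketch below assumes a simplified call shape of `{"name": ..., "arguments": {...}}`. Each provider's actual wire format differs, so treat the shape, the `dispatch` function, and the in-memory `ORDERS` data as illustrative.

```python
import json

ORDERS = {"A100": "shipped"}  # stand-in for a real database


def get_order_status(order_id: str) -> dict:
    """Look up an order; a real tool would query your order system."""
    return {"order_id": order_id, "status": ORDERS.get(order_id, "not_found")}


# Registry: the only functions the model is allowed to trigger.
TOOLS = {"get_order_status": get_order_status}


def dispatch(tool_call_json: str) -> dict:
    """Run a model-proposed tool call and return a result the model can read."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call.get("name"))
    if tool is None:
        # Never execute a tool the application did not register.
        return {"error": f"unknown tool: {call.get('name')}"}
    try:
        return tool(**call.get("arguments", {}))
    except TypeError as exc:
        # Wrong or missing arguments: report back so the model can retry.
        return {"error": f"bad arguments: {exc}"}
```

The model chooses the tool, but the registry bounds what it can choose from. Errors are returned as data rather than raised, so they can be fed back into the conversation.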

Guardrails and Safety

AI agents make mistakes. Input validation catches malicious prompts, filters sensitive data, and handles out-of-scope requests. Output filtering prevents hallucinations from reaching users, validates critical factual claims, and moderates tone. Open-source options include Guardrails AI and NVIDIA's NeMo Guardrails. Most production builds also include custom validation logic for domain-specific risk.
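As a rough sketch of what that custom validation logic can look like, the example below pairs an input check with an output check. It does not use Guardrails AI or NeMo Guardrails. The patterns, phrase list, topic list, and order-ID format are simplistic assumptions chosen for illustration, and real prompt-injection defenses need far more than substring matching.

```python
import re

CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators
INJECTION_HINTS = ("ignore previous instructions", "reveal your system prompt")
ALLOWED_TOPICS = ("order", "delivery", "tracking")  # hypothetical agent scope


def check_input(message: str) -> tuple[str, str]:
    """Return (verdict, sanitized_message); verdict is 'ok', 'blocked', or 'out_of_scope'."""
    lowered = message.lower()
    if any(hint in lowered for hint in INJECTION_HINTS):
        return "blocked", ""
    # Redact anything that looks like a card number before it reaches the model or logs.
    sanitized = CARD_PATTERN.sub("[REDACTED]", message)
    if not any(topic in lowered for topic in ALLOWED_TOPICS):
        return "out_of_scope", sanitized
    return "ok", sanitized


def check_output(reply: str, known_order_ids: set[str]) -> bool:
    """Return True only if every order ID in the reply came from a tool result."""
    mentioned = set(re.findall(r"\b[A-Z]\d{3,}\b", reply))
    return mentioned <= known_order_ids
```

The output check is the more agent-specific of the two: it compares what the model said against what your systems actually returned, and a `False` result is a cue to regenerate the reply or escalate.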

| Category | Component | Purpose |
|---|---|---|
| Backend + Orchestration | FastAPI (Python) | API layer and request handling |
| Backend + Orchestration | LangChain | Orchestration, prompt chaining, RAG pipelines |
| Backend + Orchestration | OpenAI GPT-4 | Core LLM for responses |
| Backend + Orchestration | PostgreSQL | Stores user data and conversation logs |
| Backend + Orchestration | Pinecone | Vector database for knowledge retrieval |
| Backend + Orchestration | Redis | Session management and caching |
| Infrastructure + Monitoring | AWS / Google Cloud | Cloud hosting and scalability |
| Infrastructure + Monitoring | Docker | Containerization for consistent deployments |
| Infrastructure + Monitoring | GitHub Actions | CI/CD pipeline automation |
| Infrastructure + Monitoring | LangSmith | Observability and debugging LLM workflows |
| Infrastructure + Monitoring | Sentry | Error tracking and monitoring |
| Cost Estimate | ~$600/month | Estimated cost at ~10K conversations |

Compare that roughly $600 per month to a platform's $1,500 to $2,000 per month for similar volume, and the economics of custom development at scale become clear.

Step-by-Step: How to Build an AI Agent

If you have decided that custom AI agent development is the right path, here is the realistic process. Not the optimistic version from a sales deck, but the actual timeline and activities from teams that have shipped production agents.

Phase 1: Discovery and Planning (2–3 Weeks)

Map user journeys and identify pain points. Define success metrics before writing a line of code. Prioritise use cases ruthlessly, starting with a single clear use case rather than trying to build everything at once. Design conversation flows. Identify every integration you will need. Choose your tech stack. The deliverable is a requirements document, conversation design mockups, a technical architecture diagram, and a cost and timeline estimate with realistic buffers.

The most common mistake at this phase is scope creep. Starting with "Handle order status inquiries" and executing it perfectly is worth far more than a bloated V1 that handles twelve use cases poorly.

Phase 2: MVP Development (6–10 Weeks)

Weeks one and two lay the foundation: infrastructure setup, authentication, a basic chat interface, and LLM connection. Weeks three and four implement core logic: conversation orchestration, memory systems, the tool and integration layer, and the first real data integration. Weeks five through seven add error handling, fallbacks, human escalation, logging, and monitoring. Weeks eight through ten cover user testing, edge case handling, performance optimization, and documentation.

Phase 3: Testing and Iteration (3–4 Weeks)

This phase covers alpha testing with an internal team, beta testing with select customers, load testing, and systematic conversation quality review. The key metrics to track from day one are containment rate (percentage of conversations handled without human help), task completion rate, customer satisfaction scores, escalation rate, and response accuracy.
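Three of these metrics fall straight out of conversation logs. The sketch below assumes each logged conversation carries two boolean flags. The `Conversation` record and its field names are hypothetical, and satisfaction scores and response accuracy are left out because they need survey data and human review respectively.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated: bool        # handed to a human at any point
    task_completed: bool   # the user's goal was achieved


def summarize(conversations: list[Conversation]) -> dict[str, float]:
    """Compute containment, escalation, and task completion rates as fractions."""
    total = len(conversations)
    if total == 0:
        return {"containment_rate": 0.0, "escalation_rate": 0.0, "task_completion_rate": 0.0}
    escalated = sum(c.escalated for c in conversations)
    completed = sum(c.task_completed for c in conversations)
    return {
        "containment_rate": (total - escalated) / total,
        "escalation_rate": escalated / total,
        "task_completion_rate": completed / total,
    }
```

Containment and escalation are complements by construction here. Task completion is tracked separately because a conversation can stay with the agent and still fail the user.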

The iteration loop is straightforward: review failed conversations, identify patterns, update prompts or improve tools, re-test, repeat. Most critical improvements in a new agent come from this process rather than architectural changes.

Phase 4: Launch and Continuous Improvement

Soft-launch to 10 to 20 percent of traffic and monitor closely for the first week. Fix critical issues before ramping to full traffic over two to four weeks. Post-launch, budget roughly five to ten hours per month for a stable agent: weekly transcript reviews, monthly metric analysis, quarterly capability expansion. The first three months will require more. After that, the overhead is manageable for a small team.
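One common way to implement a percentage-based soft launch is deterministic bucketing on a stable user identifier, so that the same user always gets the same experience and raising the percentage only adds users. A minimal sketch, with `in_rollout` as an illustrative name:

```python
import hashlib


def in_rollout(user_id: str, rollout_percent: int) -> bool:
    """Deterministically assign a user to the agent based on a stable hash."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # 0..99, stable across restarts and servers
    return bucket < rollout_percent
```

Start with `in_rollout(user_id, 15)` at the routing layer, then raise the number as you ramp toward full traffic. Users already in the 15 percent group stay in the rollout at every higher setting.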

AI Agent Platform Comparison

For teams going the platform route, the choice of platform matters more than most product comparisons suggest. Here is what you need to know about the major options beyond their marketing pages.

No-Code Platforms

Zapier / Make.com excel at connecting existing apps with basic AI capabilities. Their integration libraries are enormous. But they are not built for complex, stateful multi-turn conversations. Use them for simple automation with AI-assisted steps, not for agents that need to hold context across a conversation and take multi-step actions.

Voiceflow is genuinely excellent as a design and prototyping tool. Visual conversation builders help teams align on what an agent should do before anyone writes code. As a production AI agent platform for high-volume deployments, it shows limitations. Treat it as a design tool, not a deployment platform.

Low-Code Platforms

Microsoft Copilot Studio makes strong sense if your business is already deeply embedded in the Microsoft ecosystem. Teams, Dynamics, and Office integrations are genuinely good. Outside that ecosystem, the friction increases significantly and often offsets the platform's convenience advantages.

Google Dialogflow sits in the most useful middle ground for technical teams: more flexibility than no-code platforms, less overhead than a full custom build. Advanced workflows still require custom code, but the baseline agent capability is solid and the pay-as-you-go pricing is transparent.

The Migration Problem

Many teams start on platforms, hit their limits, and migrate to custom. This transition is expensive in ways that are easy to underestimate. Conversation history is difficult to export cleanly from most platforms. The conversation design was built around platform constraints, requiring a redesign. Users are accustomed to the platform's UX, and transitions create confusion. The real cost of this path is platform fees plus migration costs plus the opportunity cost of rebuilding from scratch. If you expect to outgrow a platform within 12 to 18 months, building custom from the start is almost always cheaper overall.

AI Agents Working in Production: 3 Industry Benchmarks

Before examining what a custom build looks like in practice, it helps to understand what production-grade AI agents are actually delivering across different industries today. These are not proofs-of-concept. They are live systems with measurable outcomes.

Fintech AI Development: Klarna's Customer Service Agent

In early 2024, Klarna deployed an OpenAI-powered assistant that handled the workload of 700 agents in its first month.

By 2026, that experiment has matured into a definitive blueprint for the "Agentic Economy." What began as a customer service chatbot has evolved into an AI-first operating model, enabling Klarna to more than triple its revenue per employee while strategically reducing its workforce through AI-driven automation and natural attrition.

Today, Klarna operates as a global digital bank where over 85% of routine interactions are managed by autonomous agents. However, following a "reality check" in mid-2025 regarding complex customer disputes, Klarna pivoted to a VIP Hybrid Model. In this system, AI handles high-volume "noise," while a specialized network of human experts, including passionate "power users" hired via an Uber-style platform, focuses on high-nuance, high-empathy cases.

| Metric | 2024 (Initial Launch) | 2026 (Current Status) |
|---|---|---|
| Workload Equivalent | 700 Full-time agents | 850+ Full-time agents |
| Revenue per Employee | ~$400,000 | $1.3 Million (Up 3.6x since 2022) |
| Support Model | AI-First (Whitelist) | Hybrid "VIP" Human Triage |
| Workforce Size | ~5,000 employees | ~3,000 employees |
| Resolution Time | Under 2 minutes | ~2 mins (AI) / 12+ mins (Human VIP) |
| Profit Impact | $40M (Projected) | $65M Adjusted Operating Profit (FY25) |
| Market Valuation | $6.7B (Internal) | ~$15B (Post-2025 IPO) |

Customer satisfaction scores remained on par with human agents, a result that surprised even Klarna internally. The agent handles refunds, payment issues, cancellations, and account queries.

The key architectural decision: the agent only retrieves information from Klarna's own help center and customer account data, operating on a strict whitelist that prevents hallucination on financial details.

Klarna has since evolved to a hybrid model: the agent manages routine queries and human agents handle complex cases, a template that reflects how most mature deployments end up operating.

Finance: Morgan Stanley's Advisor Intelligence Suite

At enterprise scale, the problem shifts from 'can this work?' to 'can this work reliably across 16,000 users, 350,000 documents, and a compliance-sensitive industry?'

That's where enterprise AI development differs from a standard build.

Morgan Stanley Wealth Management built its AI agent suite with a specific goal: give 16,000+ financial advisors instant access to the firm's entire intellectual capital without making them dig through documents manually.

The AI @ Morgan Stanley Assistant, built on GPT-4, indexes over 350,000 proprietary research documents and answers natural-language questions in seconds. Before its deployment, advisors spent 30 or more minutes tracking down answers. After, the same query resolves in under a minute, and document retrieval efficiency jumped from 20 percent to 80 percent.

The AI @ Morgan Stanley Debrief, launched in mid-2024, handles meeting notes. With client consent, it transcribes advisor-client Zoom calls, extracts key action items, drafts follow-up emails, and pushes the summary directly into the client's Salesforce CRM profile. Advisors review and approve before anything is sent.

Measured Outcome: 98% Adoption Among Financial Advisor Teams

That is not a rollout metric. It is a signal of genuine utility. Morgan Stanley directly attributed a record $64 billion in net new assets in Q3 2024 and 100,000 new clients to the efficiency gains from these AI tools. Each advisor saves approximately 30 minutes of administrative time per client meeting, reallocated entirely to client-facing work.

E-Commerce: Shopify Sidekick

Shopify's Sidekick AI agent, embedded directly into the merchant admin, illustrates a different model: an agent that operates inside a platform to give non-technical business owners intelligence they could not access without a data analyst on staff.

Merchants can ask Sidekick natural-language questions like "Why are sales down this week?" or "Which products have the highest conversion rate in Q4?" and get answers drawn from their own store data in seconds. Beyond answering questions, Sidekick takes action: it creates discount campaigns, optimizes product pages, builds customer segments, and adjusts marketing settings, all from a conversational interface, with merchant approval before anything goes live.

The numbers from Shopify's own data tell the adoption story: orders from AI-assisted search increased 15x between January 2025 and January 2026. Merchants using Sidekick report saving 30 to 45 minutes per day on administrative tasks. For a solo operator or a small team, that is meaningful capacity freed for customer relationships and growth.

Across these three examples, the pattern is consistent: agents that connect to real data, operate within defined boundaries, and hand off gracefully to humans when complexity demands it outperform agents built for maximum autonomy.

Teams that want something between a scripted bot and a full autonomous agent often find that conversational AI development is the right middle ground: goal-driven, but with tighter guardrails than a fully autonomous system.

Our Work: AI Voice Agents for Interview Research

These enterprise deployments illustrate what is possible at scale. But some of the most instructive AI agent work happens at the product level, where a small team sets out to solve a specific problem that existing platforms simply cannot handle.

A USA Today bestselling author and behavioral strategist approached us with a clear brief: transform his text-based interview platform into a voice-first system that could reach anyone with a phone. The problem with the existing platform was structural. Text surveys require internet access, typing skills, and a willingness to fill out forms, all barriers that suppress completion rates and exclude the people whose voices matter most in research.

The goal was an AI agent that could call recipients directly, conduct a full multi-turn interview over the phone in natural conversation, and deliver richer insights than any text survey could capture. Three platform options were assessed. None could combine outbound telephony, voice AI capable of adaptive conversation, and the reporting depth the project required. A custom build was the only path.

The technical foundation used Twilio for global outbound calling and SMS delivery, ElevenLabs Voice Agents for natural-sounding AI interviews that adapt their questions based on responses, AWS Lambda for the serverless backend handling concurrent calls without latency, and PostgreSQL for real-time data management. The agent does not just transcribe; it performs sentiment analysis, keyword tracking, and feature-level reporting on every completed call, generating actionable intelligence without the interviewer reviewing each transcript manually.

The call management dashboard tracks every interview in real time: completed, failed, and pending. For failed attempts (no answer, busy signal, or network drop), the system offers one-click retry or automatic SMS fallback with a web interview link.

The AI insights bot lets researchers ask natural-language questions directly about their data: "What are the top pain points mentioned?" or "Summarize feedback about the onboarding process."

Timeline: 12 weeks from discovery to production.

Stack: ReactJS, AWS Serverless Lambda, PostgreSQL, ElevenLabs, Twilio.

Result: A platform that reaches anyone with a phone, delivers 6x deeper insights than text surveys, and makes feedback available with zero delay the moment each interview ends.

If your use case involves phone-based outreach or voice-first interfaces, AI voicebot development is a different discipline from text agents: the latency tolerances, fallback logic, and telephony integration all change the architecture.

The central lesson of the project reflects the broader pattern visible in the Klarna and Morgan Stanley deployments: platform limitations become expensive precisely where your use case gets interesting.

Outbound voice calling, adaptive conversation flow, and sentiment-level reporting were always going to require a custom build. Identifying that early rather than three months into a platform evaluation is what determines whether an AI agent project delivers value or becomes a sunk cost.

Decision Matrix: Which Path Is Right for You

Six questions will determine the right approach for your situation.

| Question | Platform Signal | Custom Signal |
|---|---|---|
| Monthly volume | 300–1,000 interactions | 5,000+ interactions |
| Use case uniqueness | Standard: support, booking, FAQs | Proprietary workflows, complex logic |
| Timeline | Need it in 4 weeks | Can wait 3–4 months |
| Budget | Under $10,000 first year | $30,000–$60,000+ available |
| Dev resources | No in-house developers | Full team or development partner |
| Strategic importance | Nice-to-have tool | Competitive differentiator or core feature |

For 80 percent of companies in the evaluation phase, the hybrid approach delivers the best risk-adjusted outcome. Validate with a platform for two to three months. Build custom with those insights for three to four months. The total timeline is five to seven months, but you enter the custom build with real data and zero guesswork about what users actually need.

Common Challenges and How to Overcome Them

1. The Agent Keeps Getting It Wrong

The root causes are almost always unclear or conflicting prompts, insufficient training examples, or hallucination on domain-specific facts. The fixes are equally straightforward: sharpen the system prompt to state explicitly what the agent should and should not do, connect the agent to a knowledge base via retrieval-augmented generation so it cites facts rather than generating them, and use function calling to structure critical outputs rather than letting the model improvise.

Before vs After: Prompt Engineering

BEFORE (vague):

"Help users with their orders."

AFTER (specific):

"You are a customer service agent. Your ONLY job is to:

- Look up order status by order number
- Provide tracking information
- Handle cancellations if order has not shipped

If user asks about returns, refunds, or technical issues, escalate to human agent immediately. Never make up order information. Always use get_order_status tool."
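The `get_order_status` tool named in the prompt could be wired up roughly as follows. This is a minimal sketch, not a specific provider's API: the tool description uses the JSON-Schema style that most function-calling interfaces accept (the exact envelope differs by provider), and `ORDERS`, the order numbers, and `handle_tool_call` are hypothetical names introduced for illustration.

```python
import json

# Hypothetical stand-in for a real order database.
ORDERS = {
    "A1001": {"status": "shipped", "tracking": "1Z999"},
    "A1002": {"status": "processing", "tracking": None},
}

# JSON-Schema style tool description; provider-specific wrappers are omitted.
GET_ORDER_STATUS_TOOL = {
    "name": "get_order_status",
    "description": "Look up the status and tracking number for an order.",
    "parameters": {
        "type": "object",
        "properties": {"order_number": {"type": "string"}},
        "required": ["order_number"],
    },
}


def get_order_status(order_number: str) -> dict:
    """Return stored order data, or an explicit not-found result."""
    order = ORDERS.get(order_number)
    if order is None:
        return {"found": False, "order_number": order_number}
    return {"found": True, "order_number": order_number, **order}


def handle_tool_call(name: str, arguments_json: str) -> dict:
    """Run a tool call emitted by the model; escalate anything unexpected."""
    if name != "get_order_status":
        return {"escalate": True, "reason": f"unsupported tool: {name}"}
    try:
        args = json.loads(arguments_json)
        order_number = args["order_number"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return {"escalate": True, "reason": "malformed arguments"}
    return get_order_status(str(order_number))


print(handle_tool_call("get_order_status", '{"order_number": "A1001"}'))
```

Because the answer comes from the lookup rather than from the model, an unknown order number produces a not-found result instead of invented order details.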

2. Users Do Not Trust the AI

Be upfront that users are talking to an AI. Show competence immediately on the first question. Make human escalation easy and visible. Offer a concrete incentive for staying with the agent when wait times are relevant: "I can answer this right now, or transfer you — the team is currently about eight minutes out."

3. Costs Scale Faster Than Value

This is a prompt engineering and model-selection problem. GPT-4 costs roughly $30 per million input tokens at list price; GPT-3.5 Turbo costs roughly $0.50, about 60 times less. At 100,000 conversations per month, that gap dominates the bill. Use cheaper models for simple FAQ-style tasks and reserve GPT-4 or Claude for the complex reasoning cases that genuinely require it. Implement caching for common responses. Optimize prompt length. At very high volumes, self-hosted models become economically compelling.
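A rough back-of-the-envelope calculation shows how much routing matters. The sketch below treats the prices quoted above as a flat per-token rate (real pricing distinguishes input and output tokens), and it assumes 1,000 tokens per conversation and an 80 percent share of simple conversations; both numbers are placeholders to replace with your own measurements.

```python
# Prices per million tokens, taken from the figures quoted above.
PRICE_PER_MILLION_TOKENS = {"large": 30.00, "small": 0.50}


def monthly_cost(conversations: int, tokens_per_conversation: int, model: str) -> float:
    """Monthly LLM spend in USD for one model at a flat per-token rate."""
    total_tokens = conversations * tokens_per_conversation
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS[model]


def blended_cost(conversations: int, tokens_per_conversation: int,
                 share_simple: float) -> float:
    """Spend when simple conversations go to the small model, the rest to the large one."""
    simple = round(conversations * share_simple)
    complex_ = conversations - simple
    return (monthly_cost(simple, tokens_per_conversation, "small")
            + monthly_cost(complex_, tokens_per_conversation, "large"))


# Assumed workload: 100,000 conversations at 1,000 tokens each.
all_large = monthly_cost(100_000, 1_000, "large")
all_small = monthly_cost(100_000, 1_000, "small")
routed = blended_cost(100_000, 1_000, share_simple=0.8)
print(f"all large: ${all_large:,.0f}  all small: ${all_small:,.0f}  routed: ${routed:,.0f}")
```

Under these assumptions the all-large bill is about $3,000 a month, the all-small bill about $50, and routing 80 percent of traffic to the small model lands near $640.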

4. Integration Is Harder Than Expected

Legacy systems without APIs are the hardest integration challenge. The solution is usually a lightweight API wrapper that translates between the agent's tool calls and the legacy system's interface. Webhooks handle real-time updates where direct API calls are not feasible. When a specific action is not yet supported, the agent should escalate gracefully: "I cannot do that in this conversation, but I have notified the team." Ship an imperfect integration before shipping nothing.
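The wrapper idea can be sketched as a small adapter that translates tool calls into whatever the legacy system understands and falls back to the escalation message for anything unsupported. Everything here is hypothetical: `LegacyInventorySystem`, its fixed-width `QRY` command, and the `check_stock` action stand in for a real system, and the sketch returns the message without actually notifying anyone.

```python
ESCALATION_MESSAGE = "I cannot do that in this conversation, but I have notified the team."


class LegacyInventorySystem:
    """Hypothetical legacy system that only understands fixed-width text commands."""

    def run(self, command: str) -> str:
        if command.startswith("QRY"):
            return "OK  0042"
        return "ERR"


class LegacyAdapter:
    """Translates agent tool calls into legacy commands and back."""

    def __init__(self, legacy: LegacyInventorySystem) -> None:
        self._legacy = legacy
        self._handlers = {"check_stock": self._check_stock}

    def _check_stock(self, args: dict) -> dict:
        sku = str(args["sku"])
        raw = self._legacy.run(f"QRY{sku:>10}")  # assumed command format
        if not raw.startswith("OK"):
            raise RuntimeError("legacy query failed")
        return {"sku": sku, "units": int(raw[2:].strip())}

    def call(self, action: str, args: dict) -> dict:
        """Run a supported action, or escalate gracefully."""
        handler = self._handlers.get(action)
        if handler is None:
            # A real implementation would also open a ticket or page the team here.
            return {"ok": False, "escalated": True, "message": ESCALATION_MESSAGE}
        try:
            return {"ok": True, "result": handler(args)}
        except (KeyError, ValueError, RuntimeError):
            return {"ok": False, "escalated": True, "message": ESCALATION_MESSAGE}


adapter = LegacyAdapter(LegacyInventorySystem())
print(adapter.call("check_stock", {"sku": "ABC"}))
print(adapter.call("update_price", {"sku": "ABC", "price": 9.99}))
```

New actions are added by registering another handler, so the integration can ship with one supported action and grow from there.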

The Real Question

The companies winning with AI agents are not using them to cut costs as the primary goal. They are using them to scale without chaos, to deliver instant responses where eight-minute wait times used to lose customers, and to free their teams from password resets and status checks so they can solve problems that actually require human judgment.

The decision is not whether to invest in AI agent development. It is which path to invest in given your specific situation: a platform to validate and learn quickly, custom development for full control and long-term advantage, or a hybrid that starts with one and migrates to the other once you know exactly what to build.

None of these paths are wrong. Picking the wrong one for your situation is where the waste comes from. The framework in this guide exists to help you avoid that waste.

The technology works. The economics make sense at reasonable volume. The only question remaining is whether you build your advantage before your competitors build theirs.


Frequently Asked Questions

- How much does it cost to build an AI agent? Basic AI agents cost $20,000-$40,000 to build, covering core functionality with 2-3 integrations. Complex agents with advanced features, HIPAA compliance, or extensive custom development cost $40,000-$80,000+. Ongoing costs run $400-$1,200/month for infrastructure and LLM API usage. Total 3-year cost of ownership typically ranges from $30,000-$70,000 for custom versus $20,000-$110,000 for platform solutions depending on usage volume.

- How long does it take to build an AI agent? MVP development takes 8-12 weeks from initial consultation to deployment. Basic agents with simple workflows and limited integrations can be built in 8 weeks. Production-grade agents with multiple integrations, compliance requirements, and advanced features require 12-14 weeks. Enterprise implementations with extensive security, multi-system integration, and custom model training can take 16-24 weeks.

- Can I migrate from a platform to a custom build later? Yes, but it requires rebuilding from scratch. Platforms don't export orchestration logic or fine-tuned models. You'll need to reimplement conversation flows, tool integrations, and prompts in your custom stack. Budget 8-12 weeks for migration plus the cost of running both systems in parallel during transition. Data (conversation logs, user preferences) usually migrates cleanly. To keep the migration manageable, treat the platform phase as validation and start the custom build once its limitations become expensive.

- What is the difference between a chatbot and an AI agent? Chatbots respond to user messages but require humans to execute actions. AI agents perceive their environment, make autonomous decisions, and take actions to achieve goals without human intervention. Example: A chatbot tells you how to reset your password. An AI agent resets your password, logs the action, updates your account, and emails confirmation, all without human involvement. If it requires human review before every action, it's a chatbot, not an agent.

- Do I need AI or ML specialists to build an AI agent? Senior full-stack developers can build basic AI agents without dedicated AI expertise. Prompt engineering is the primary skill (teaching LLMs how to behave), which is closer to copywriting than machine learning. For advanced features like custom model fine-tuning, RAG with vector databases, or multi-agent orchestration, you'll need ML/AI experience. Most teams start with LangChain and GPT-5.2, which existing developers can handle. Add AI specialists only when moving to custom models or scaling beyond basic implementations.

- What ongoing and hidden costs should I budget for? LLM API usage scales with conversation volume (budget $200-$800/month for moderate scale). Infrastructure costs (hosting, databases, vector search) run $150-$500/month. Ongoing development for bug fixes and feature additions costs $3,000-$8,000/year. Security and compliance audits add $5,000-$15,000 for HIPAA or SOC 2 requirements. Model retraining or optimization costs $2,000-$5,000 annually. Knowledge transfer if developers leave requires 2-4 weeks of documentation and training. Budget 20-30% of initial development cost annually for maintenance.

- Should I use commercial LLM APIs or self-hosted open-source models? Use OpenAI/Anthropic APIs when starting out: they are faster to prototype with, perform better out of the box, and require no infrastructure management. Switch to open-source models (Llama, Mixtral) when: (1) LLM API costs exceed $2,000/month and volume justifies infrastructure investment, (2) data can't leave your servers due to compliance, (3) you need full control over model behavior through fine-tuning, or (4) you want to avoid vendor lock-in long-term. Infrastructure cost for self-hosting starts at $500-$1,000/month for GPU instances.

- How do I keep an AI agent from hallucinating or going off-script? Implement retrieval-augmented generation (RAG) to ground responses in factual documents. Use strict system prompts that define boundaries and constraints. Add output validation that checks responses against ground truth before sending. Implement confidence scoring and escalate to humans when confidence is low. Set up guardrails that filter toxic or off-topic content. Log all outputs and human override rates to identify problematic patterns. Include few-shot examples of correct behavior in prompts. For critical systems, use two-model validation (second LLM reviews first LLM's output).

- When does a custom build break even against a platform? Break-even depends on conversation volume and complexity. Calculate: (Custom development cost) / (Monthly platform cost - Monthly custom infrastructure cost) = Months to break-even. For simple customer support at $0.15/conversation platform cost versus a $35,000 custom build plus $400/month infrastructure, usage fees alone at 10,000 conversations/month come to $1,500/month, which puts break-even at roughly 32 months. Break-even in 8-12 months requires a total monthly platform bill of roughly $3,300-$4,800, which is reached at higher volume or once subscription fees and add-ons are included. For complex workflows where platforms require expensive add-ons or extensive workarounds, break-even happens sooner. If volume is predictable and growing, custom economics improve over time.

- Can AI agents handle sensitive or regulated data? Yes, but architecture matters. For highly sensitive data: use open-source models hosted on your infrastructure (no data leaves your servers). For moderate sensitivity: use OpenAI API with their business tier (data not used for model training) or Azure OpenAI (deployed in your tenant). For HIPAA compliance: sign Business Associate Agreements (BAAs) with vendors or self-host entirely. Implement encryption at rest and in transit. Log all data access for audit trails. Compliance coverage on platforms such as Intercom and Zendesk varies by vendor and plan and may not meet every enterprise requirement, which is another reason to build custom for regulated industries.
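The break-even formula given in the FAQ above can be checked with a few lines of arithmetic. The sketch below uses the figures quoted there as inputs ($35,000 build, $400/month infrastructure, $0.15 per conversation) and assumes the platform bill consists of per-conversation fees only; the function names are introduced for this example.

```python
from typing import Optional


def usage_based_platform_cost(conversations_per_month: int,
                              price_per_conversation: float) -> float:
    """Monthly platform bill when only per-conversation fees apply (assumption)."""
    return conversations_per_month * price_per_conversation


def months_to_break_even(custom_build_cost: float,
                         monthly_platform_cost: float,
                         monthly_custom_cost: float) -> Optional[float]:
    """Custom development cost divided by the monthly saving over the platform."""
    monthly_saving = monthly_platform_cost - monthly_custom_cost
    if monthly_saving <= 0:
        return None  # the custom build never pays back at this volume
    return custom_build_cost / monthly_saving


platform_bill = usage_based_platform_cost(10_000, 0.15)
months = months_to_break_even(35_000, platform_bill, 400)
print(f"platform bill: ${platform_bill:,.0f}/month, break-even in {months:.1f} months")
```

Any fixed subscription fees or add-ons belong in `monthly_platform_cost` as well; including them raises the monthly saving and shortens the payback period.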